Generative AI instruments equivalent to Midjourney, Secure Diffusion, and DALL-E 2 have astounded us with their capacity to supply exceptional photographs in a matter of seconds.

Regardless of their achievements, nevertheless, there stays a puzzling disparity between what AI picture turbines can produce and what we are able to. As an example, these instruments usually received’t ship passable outcomes for seemingly easy duties equivalent to counting objects and producing correct textual content.

If generative AI has reached such unprecedented heights in inventive expression, why does it battle with duties even a main college pupil might full?

Exploring the underlying causes helps sheds gentle on the advanced numerical nature of AI, and the nuance of its capabilities.

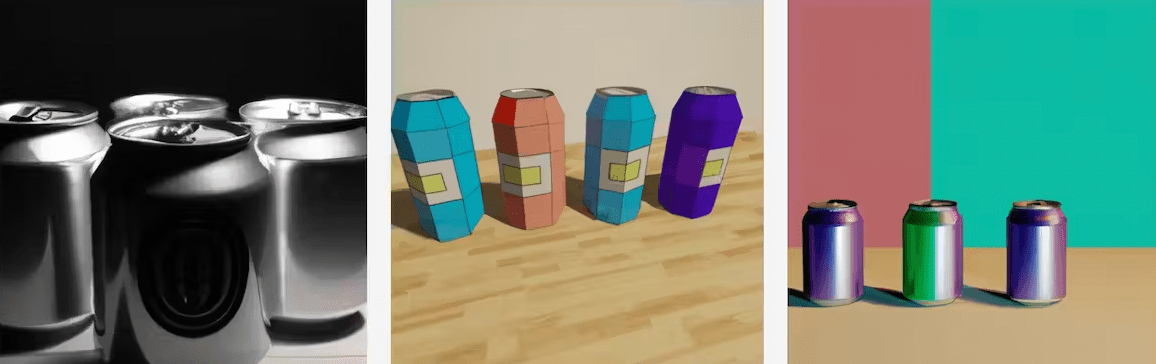

AI’s limitations with writing

People can simply acknowledge textual content symbols (equivalent to letters, numbers, and characters) written in numerous totally different fonts and handwriting. We are able to additionally produce textual content in several contexts, and perceive how context can change which means.

Present AI picture turbines lack this inherent understanding. They don’t have any true comprehension of what textual content symbols imply. These turbines are constructed on synthetic neural networks skilled on large quantities of picture information, from which they “be taught” associations and make predictions.

Combos of shapes within the coaching photographs are related to numerous entities. For instance, two inward-facing traces that meet would possibly signify the tip of a pencil or the roof of a home.

However relating to textual content and portions, the associations should be extremely correct, since even minor imperfections are noticeable. Our brains can overlook slight deviations in a pencil’s tip or a roof – however not as a lot relating to how a phrase is written, or the variety of fingers on a hand.

So far as text-to-image fashions are involved, textual content symbols are simply combos of traces and shapes. Since textual content is available in so many various kinds – and since letters and numbers are utilized in seemingly infinite preparations – the mannequin usually received’t discover ways to successfully reproduce textual content.

The principle cause for that is inadequate coaching information. AI picture turbines require rather more coaching information to precisely signify textual content and portions than they do for different duties.

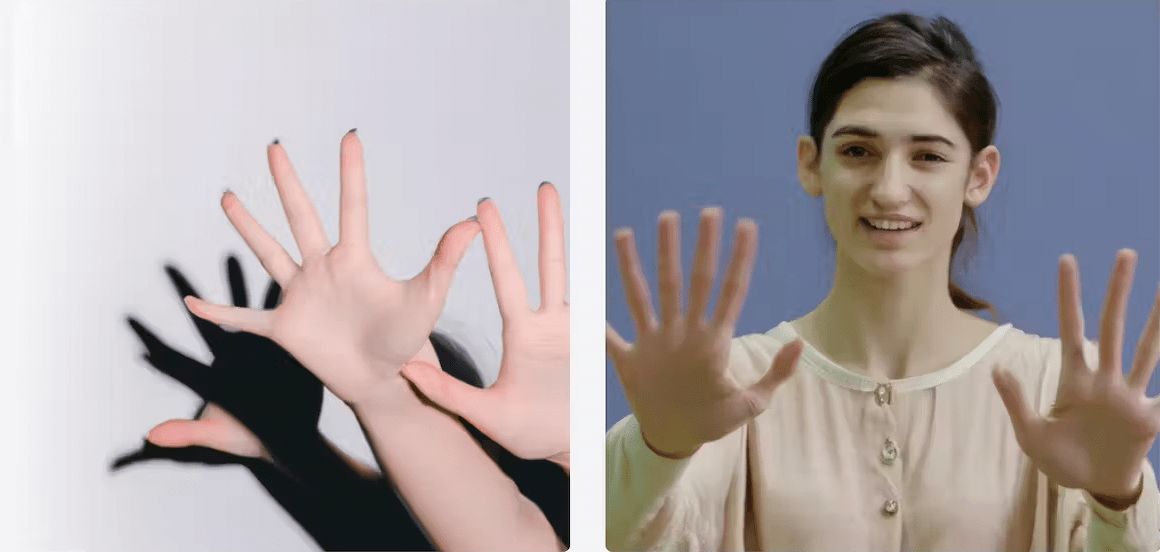

The tragedy of AI arms

Points additionally come up when coping with smaller objects that require intricate particulars, equivalent to arms.

In coaching photographs, arms are sometimes small, holding objects, or partially obscured by different components. It turns into difficult for AI to affiliate the time period “hand” with the precise illustration of a human hand with 5 fingers.

Consequently, AI-generated arms usually look misshapen, have further or fewer fingers, or have arms partially lined by objects equivalent to sleeves or purses.

We see an analogous situation relating to portions. AI fashions lack a transparent understanding of portions, such because the summary idea of “4.” As such, a picture generator could reply to a immediate for “4 apples” by drawing on studying from myriad photographs that includes many portions of apples – and return an output with the inaccurate quantity.

In different phrases, the massive variety of associations inside the coaching information impacts the accuracy of portions in outputs.

Will AI ever be capable of write and rely?

It’s necessary to recollect text-to-image and text-to-video conversion is a comparatively new idea in AI. Present generative platforms are “low-resolution” variations of what we are able to anticipate sooner or later.

With developments being made in coaching processes and AI know-how, future AI picture turbines will probably be rather more able to producing correct visualizations.

It’s additionally price noting most publicly accessible AI platforms don’t provide the best degree of functionality. Producing correct textual content and portions calls for extremely optimized and tailor-made networks, so paid subscriptions to extra superior platforms will probably ship higher outcomes.

This text is republished from The Dialog underneath a Inventive Commons license. Learn the authentic article by Seyedali Mirjalili, Professor, Director of Centre for Synthetic Intelligence Analysis and Optimisation, Torrens College Australia.